DeepSeek V3.1 didn’t arrive with flashy press releases or an enormous marketing campaign. It simply confirmed up on Hugging Face, and inside hours, folks seen. With 685 billion parameters and a context window that may stretch to 128k tokens, it’s not simply an incremental replace. It appears like a significant second for open-source AI. This text will go over DeepSeek V3.1 key options, capabilities, and a hands-on to get you began.

What precisely is DeepSeek V3.1?

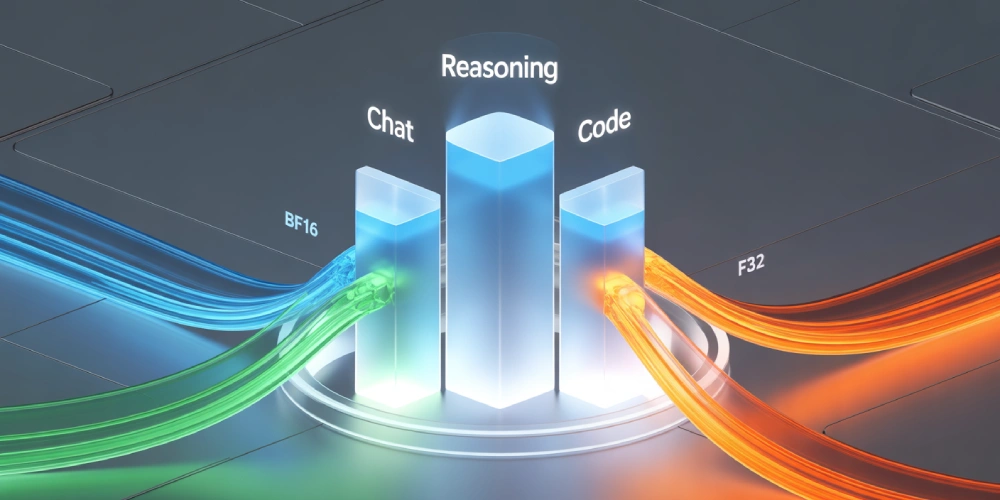

DeepSeek V3.1 is the most recent member of the V3 household. In comparison with the sooner 671B model, V3.1 is barely bigger, however extra importantly, it’s extra versatile. The mannequin helps a number of precision codecs—BF16, FP8, F32—so you may adapt it to no matter compute you could have available.

It isn’t nearly uncooked measurement, although. V3.1 blends conversational potential, reasoning, and code technology into one unified mannequin or a Hybrid mannequin. That’s a giant deal! Earlier generations typically felt like they had been good at one factor however common at others. Right here, all the pieces is built-in.

Entry DeepSeek V3.1

There are a number of alternative ways to entry DeepSeek V3.1:

- Official Net App: Head to deepseek.com and use the browser chat. V3.1 is already the default there, so that you don’t must configure something.

- API Entry: Builders can name the deepseek-chat (common use) or deepseek-reasoner (reasoning mode) endpoints by means of the official API. The interface is OpenAI-compatible, so in case you’ve used OpenAI’s SDKs, the workflow feels the identical.

- Hugging Face: The uncooked weights for V3.1 are revealed beneath an open license. You possibly can obtain them from the DeepSeek Hugging Face web page and run them regionally in case you have the {hardware}.

In case you simply need to chat with it, the web site is the quickest route. If you wish to fine-tune, benchmark, or combine into your instruments, seize the API or Hugging Face weights. The hands-on of this text is completed on the Net App.

How is it completely different from DeepSeek V3?

DeepSeek V3.1 brings a set of necessary upgrades in comparison with earlier releases:

- Hybrid mannequin with pondering mode: Provides a toggleable reasoning layer that strengthens problem-solving whereas aiming to keep away from the standard efficiency drop of hybrids.

- Native search token assist: Improves retrieval and search duties, although neighborhood exams present the characteristic prompts very often. A correct toggle continues to be anticipated within the official documentation.

- Stronger programming capabilities: Benchmarks place V3.1 on the high of open-weight coding fashions, confirming its edge in software-related duties.

- Unchanged context size: The 128k-token window stays the identical as in V3-Base, so you continue to get novel-length context capability.

Taken collectively, these updates make V3.1 not only a scale-up, however a refinement.

Why persons are paying consideration

Listed here are among the standout options of DeepSeek V3.1:

- Context window: 128k tokens. That’s the size of a full-length novel or a whole analysis report in a single shot.

- Precision flexibility: Runs in BF16, FP8, or F32 relying in your {hardware} and efficiency wants.

- Hybrid design: One mannequin that may chat, purpose, and code with out breaking context.

- Benchmark outcomes: Scored 71.6% on the Aider coding benchmark, edging previous Claude Opus 4.

- Effectivity: Performs at a stage the place some opponents would price 60–70 occasions extra to run the identical exams.

- Open-Supply: In all probability the one open supply mannequin that’s maintaining with the closed supply releases.

Attempting it out

Now we’d be testing DeepSeek V3.1 capabilities, utilizing the net interface:

1. Lengthy doc summarization

A Room with a View by E.M. Forster was used because the enter for the next immediate. The guide is over 60k phrases in size. Yow will discover the contents of the guide at Gutenberg.

Immediate: “Summarize the important thing factors in a structured define.”

Response:

2. Step-by-step reasoning

Immediate: “Step-by-step reasoning

Work by means of this puzzle step-by-step. Present all calculations and intermediate occasions right here. Hold models constant. Don’t skip steps. Double-check outcomes with a fast examine on the finish of the assume block.

A prepare leaves Station A at 08:00 towards Station B. The gap between A and B is 410 km.

Prepare A:

- Cruising velocity: 80 km/h

- Scheduled cease: 10 minutes at Station C, situated 150 km from A

- Monitor work zone: from the 220 km marker to the 240 km marker measured from A, velocity restricted to 40 km/h in that 20 km phase

- Outdoors the work zone, run on the cruising velocity

.

. (Some elements omitted for brevity; Full model will be seen within the following video)

.

Reply format (outdoors the assume block solely):

- Meet: [HH:MM], [distance from A in km, one decimal]

- Movement till meet: Prepare A [minutes]Prepare B [minutes]

- Remaining arrivals: Prepare A at [HH:MM]Prepare B at [HH:MM]First to reach: [A or B]

Solely embody the ultimate outcomes and a one-sentence justification outdoors the assume block. All detailed reasoning stays inside.”

Response:

3. Code technology

Immediate: “Write a Python script that reads a CSV and outputs JSON, with feedback explaining every half.”

Response:

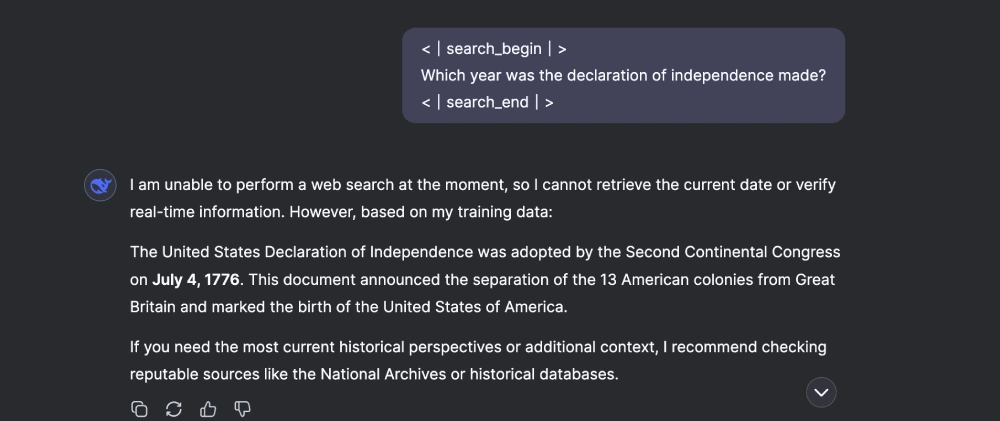

4. Search-style querying

Immediate: “<|search_begin|>

Which 12 months was the declaration of independence made?

<|search_end|>”

Response:

5. Hybrid search querying

Immediate: “Summarize the principle plot of *And Then There Had been None* briefly.

Now, <|search_begin|> Present me a hyperlink from the place I should purchase that guide. <|search_end|>. Lastly,

Response:

Commentary

Listed here are among the issues that stood out to me whereas testing the mannequin:

- If the enter size exceeds the restrict, the a part of the enter can be used as an enter (like within the first process).

- If duties are fundamental, then the mannequin goes overboard with overtly lengthy responses (like within the second process).

- The tokens used to probe the search and reasoning capabilities aren’t dependable. Generally the mannequin received’t invoke them, or else proceed with its default immediate processing routine.

- The tokens

<|search_begin|>and<|search_end|>are a part of the mannequin’s vocabulary. - They act as hints or triggers to information how the mannequin ought to course of the immediate. However since they’re tokens within the textual content area, the mannequin typically echoes them again actually in its output.

- In contrast to an API “change” that disappears behind the scenes, these tags are extra like management directions baked into the textual content stream. That’s why you’ll typically see them present up within the last reply.

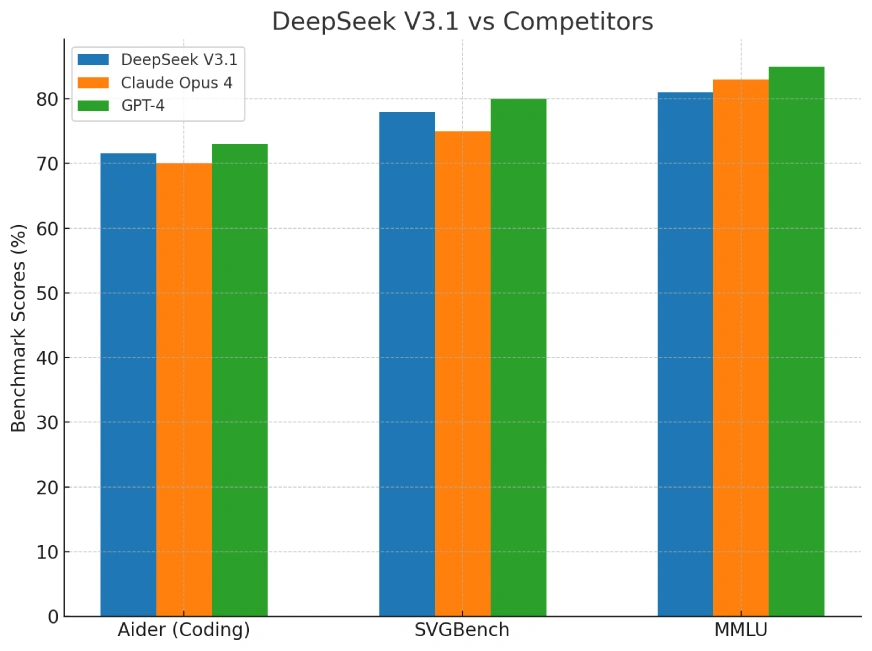

Benchmarks: DeepSeek V3.1 vs Rivals

Group exams are already exhibiting V3.1 close to the highest of open-source leaderboards for programming duties. It doesn’t simply rating effectively—it does so at a fraction of the price of fashions like Claude or GPT-4.

Right here’s the benchmark comparability:

The benchmark chart in contrast DeepSeek V3.1, Claude Opus 4, and GPT-4 on three key metrics:

- Aider (coding benchmark)

- SVGBench (programming duties)

- MMLU (broad information and reasoning)

These cowl sensible coding potential, structured reasoning, and common tutorial information.

Wrapping up

DeepSeek V3.1 is the sort of launch that shifts conversations. It’s open, it’s huge, and it doesn’t lock folks behind a paywall. You possibly can obtain it, run it, and experiment with it at this time.

For builders, it’s an opportunity to push the bounds of long-context summarization, reasoning chains, and code technology with out relying solely on closed APIs. For the broader AI ecosystem, it’s proof that high-end functionality is not restricted to only a handful of proprietary labs. We’re not restricted to choosing the right device for our use case. The mannequin now does it for you, or may very well be prompt utilizing outlined syntax. This considerably will increase the scope for various capabilities of a mannequin being put into use for fixing a fancy question.

This launch isn’t simply one other model bump. It’s a sign of the place open fashions are headed: greater, smarter, and surprisingly reasonably priced.

Ceaselessly Requested Questions

A. DeepSeek V3.1 introduces a hybrid reasoning mode, native search token assist, and improved coding benchmarks. Whereas its parameter rely is barely increased than V3, the true distinction lies in its flexibility and refined efficiency. It blends chat, reasoning, and coding seamlessly whereas holding the 128k context window.

A. You possibly can attempt DeepSeek V3.1 within the browser through the official DeepSeek web site, by means of the API (deepseek-chat or deepseek-reasoner), or by downloading the open weights from Hugging Face. The net app is best for informal testing, whereas the API and Hugging Face permit superior use circumstances.

A. DeepSeek V3.1 helps an enormous 128,000-token context window, equal to lots of of pages of textual content. This makes it appropriate for whole book-length paperwork or massive datasets. The size is unchanged from V3, nevertheless it stays one of the crucial sensible benefits for summarization and reasoning duties.

<|search_begin|> work?

A. These tokens act as triggers that information the mannequin’s conduct. <|search_begin|> and <|search_end|> activate search-like retrieval. They typically seem in outputs as a result of they’re a part of the mannequin’s vocabulary, however you may instruct the mannequin to not show them.

A. Group exams present V3.1 among the many high performers in open-source coding benchmarks, surpassing Claude Opus 4 and approaching GPT-4’s stage of reasoning. Its key benefit is effectivity—delivering comparable or higher outcomes at a fraction of the price, making it extremely engaging for builders and researchers.

Login to proceed studying and luxuriate in expert-curated content material.