Retrieval Augmented Generation (RAG) has become a popular approach to harness LLMs for question answering using your own corpus of data. Typically, the context used to augment the query that is passed into the Large Language Model (LLM) to generate an answer comes from a database or search index containing your domain data. When it is a search index, the trend is to use Vector search (HNSW ANN based) over Lexical (BM25/TF-IDF based) search, often combining both Lexical and Vector searches into Hybrid search pipelines.

In the past, I have worked on Knowledge Graph (KG) backed entity search platforms, and observed that for certain types of queries, they produce results that are superior / more relevant compared to those produced by a standard lexical search platform. The GraphRAG framework from Microsoft Research describes a comprehensive approach to leveraging a KG for RAG. GraphRAG helps produce better quality answers in the following two situations.

- the answer requires synthesizing insights from disparate pieces of information through their shared attributes
- the answer requires understanding summarized semantic concepts over part of or the entire corpus

The full GraphRAG approach consists of building a KG out of the corpus, and then querying the resulting KG to augment the context in Retrieval Augmented Generation. In my case, I already had access to a medical KG, so I focused on building out the inference side. This post describes what I had to do to get that to work. It is based largely on the ideas described in the Knowledge Graph RAG Query Engine page from the LlamaIndex documentation.

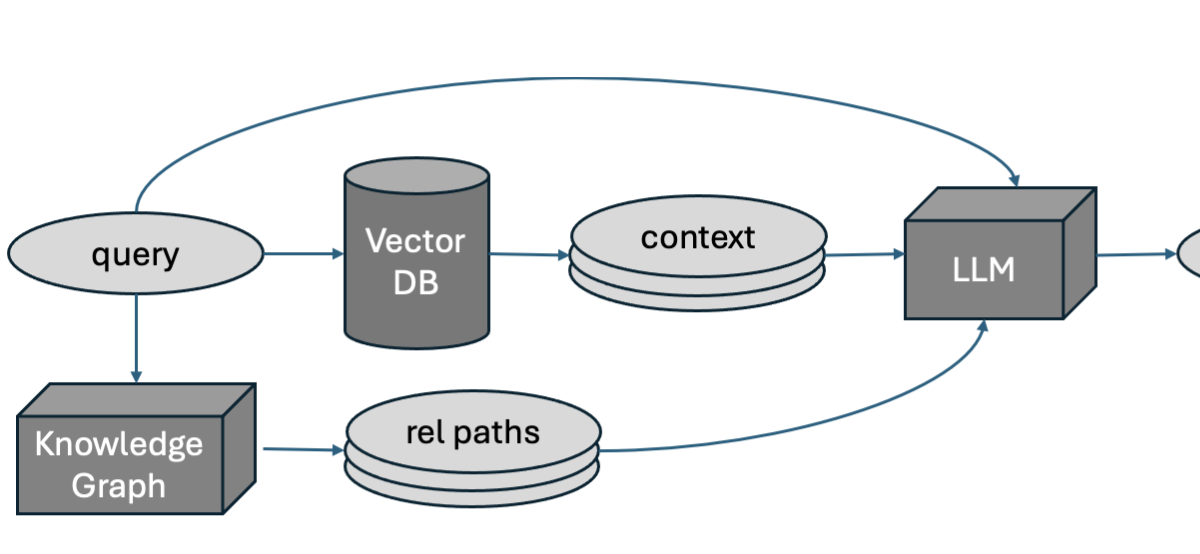

At a high level, the idea is to extract entities from the question, and then query a KG with these entities to find and extract relationship paths, single or multi-hop, between them. These relationship paths are used, in conjunction with context extracted from the search index, to augment the query for RAG. The relationship paths are the shortest paths between pairs of entities in the KG, and we only consider paths up to 2 hops in length (since longer paths are likely to be less interesting).
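To make the path-finding idea concrete, here is a minimal sketch on a toy in-memory graph, assuming an undirected adjacency list and a breadth-first search capped at 2 hops. The triples, entity names, and the `build_adjacency` and `shortest_path` helpers are all hypothetical; the actual pipeline runs this search inside a graph database rather than in Python.

```python
from collections import deque

# Hypothetical toy KG as (node, relation, node) triples, treated as undirected.
TRIPLES = [
    ("aspirin", "TREATS", "headache"),
    ("headache", "SYMPTOM_OF", "migraine"),
    ("migraine", "TREATED_BY", "sumatriptan"),
]

def build_adjacency(triples):
    """Index triples by node so edges can be walked in either direction."""
    adj = {}
    for src, rel, dst in triples:
        adj.setdefault(src, []).append((rel, dst))
        adj.setdefault(dst, []).append((rel, src))
    return adj

def shortest_path(adj, start, goal, max_hops=2):
    """Return one shortest path as [node, rel, node, ...], or None if
    no path of at most max_hops edges exists."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        hops = len(path) // 2  # path alternates node, rel, node, ...
        if hops >= max_hops:
            continue  # do not expand beyond the hop limit
        for rel, nxt in adj.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [rel, nxt])
    return None

adj = build_adjacency(TRIPLES)
two_hop = shortest_path(adj, "aspirin", "migraine")     # within the limit
too_far = shortest_path(adj, "aspirin", "sumatriptan")  # 3 hops away
```

In this toy graph the first lookup yields a 2-hop path, while the second finds nothing because the only connection is 3 hops long.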

Our medical KG is stored in an Ontotext RDF store. I'm sure we can compute shortest paths in SPARQL (the standard query language for RDF) but Cypher seems simpler for this use case, so I decided to dump out the nodes and relationships from the RDF store into flat files that look like the following, and then upload them to a Neo4j graph database using neo4j-admin database import full.

```
# nodes.csv
cid:ID,cfname,stygrp,:LABEL
C8918738,Acholeplasma parvum,organism,Ent
...

# relationships.csv
:START_ID,:END_ID,:TYPE,relname,rank
C2792057,C8429338,Rel,HAS_DRUG,7
...
```
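As a rough sketch of the export step, the following writes rows in the header format shown above using Python's `csv` module. The `nodes` and `relationships` lists are hypothetical stand-ins for rows pulled from the RDF store (they reuse the sample values from the listing), the helper names are made up for illustration, and the extraction from the RDF store itself is not shown.

```python
import csv
import io

# Hypothetical rows standing in for what would be pulled from the RDF store.
nodes = [("C8918738", "Acholeplasma parvum", "organism")]
relationships = [("C2792057", "C8429338", "HAS_DRUG", 7)]

def write_nodes(fh, rows):
    """Write the node file: header first, then one Ent row per node."""
    writer = csv.writer(fh, lineterminator="\n")
    writer.writerow(["cid:ID", "cfname", "stygrp", ":LABEL"])
    for cid, name, stygrp in rows:
        writer.writerow([cid, name, stygrp, "Ent"])

def write_relationships(fh, rows):
    """Write the relationship file: header first, then one Rel row per edge."""
    writer = csv.writer(fh, lineterminator="\n")
    writer.writerow([":START_ID", ":END_ID", ":TYPE", "relname", "rank"])
    for start, end, relname, rank in rows:
        writer.writerow([start, end, "Rel", relname, rank])

# In-memory buffers keep the sketch self-contained; real code would
# open nodes.csv and relationships.csv with newline="" instead.
nodes_buf, rels_buf = io.StringIO(), io.StringIO()
write_nodes(nodes_buf, nodes)
write_relationships(rels_buf, relationships)
nodes_csv = nodes_buf.getvalue()
rels_csv = rels_buf.getvalue()
```

Using `csv.writer` rather than string concatenation means names containing commas or quotes are escaped correctly.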

The first line in each CSV file is the header that tells Neo4j about the schema. Here our nodes are of type `Ent` and relationships are of type `Rel`, `cid` is an ID attribute that is used to connect nodes, and the other elements are (scalar) attributes of each node. Entities were extracted using our Dictionary-based Named Entity Recognizer (NER) based on the Aho-Corasick algorithm, and shortest paths are computed between each pair of extracted entities (indicated by placeholders `_LHS_` and `_RHS_`) using the following Cypher query.

```cypher
MATCH p = allShortestPaths((a:Ent {cid:'_LHS_'})-[*..]-(b:Ent {cid:'_RHS_'}))
RETURN p, length(p)
```
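A minimal sketch of how the placeholders might be filled in: a naive substring matcher stands in for the Aho-Corasick NER (it is not the same algorithm, just a simple substitute for illustration), and every unordered pair of matched entity IDs is substituted into the query template. The dictionary entries, concept IDs, and helper functions are hypothetical.

```python
from itertools import combinations

# Hypothetical dictionary mapping surface forms to KG concept IDs.
DICTIONARY = {
    "aspirin": "C0000001",
    "migraine": "C0000002",
    "nausea": "C0000003",
}

QUERY_TEMPLATE = (
    "MATCH p = allShortestPaths("
    "(a:Ent {cid:'_LHS_'})-[*..]-(b:Ent {cid:'_RHS_'})) "
    "RETURN p, length(p)"
)

def extract_entity_ids(question, dictionary):
    """Naive case-insensitive substring lookup; a stand-in for real NER."""
    text = question.lower()
    return sorted({cid for term, cid in dictionary.items() if term in text})

def build_queries(entity_ids):
    """Build one query per unordered pair of distinct entities."""
    return [
        QUERY_TEMPLATE.replace("_LHS_", lhs).replace("_RHS_", rhs)
        for lhs, rhs in combinations(entity_ids, 2)
    ]

ids = extract_entity_ids("Does aspirin help with migraine and nausea?", DICTIONARY)
queries = build_queries(ids)
```

Three entities produce three pairwise queries. String substitution mirrors the placeholder approach described here; passing the IDs as Cypher query parameters would be a safer choice if the IDs ever came from untrusted input.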

Shortest paths returned by the Cypher query that are more than 2 hops long are discarded, since these do not indicate strong / useful relationships between the entity pairs. The resulting list of relationship paths is passed into the LLM along with the search result context to produce the answer.
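The filtering and prompt assembly step could look roughly like the sketch below. It assumes each path has already been converted into a list that alternates entity names and relationship names; that representation, the sample paths, and the prompt wording are all assumptions for illustration rather than the actual implementation.

```python
MAX_HOPS = 2

def hop_count(path):
    """A path alternates node, rel, node, ..., so hops = number of rels."""
    return len(path) // 2

def format_path(path):
    """Render a path as 'A -[REL]- B -[REL]- C'."""
    parts = [path[0]]
    for i in range(1, len(path), 2):
        parts.append(f"-[{path[i]}]- {path[i + 1]}")
    return " ".join(parts)

def build_prompt(question, search_context, paths, max_hops=MAX_HOPS):
    """Drop paths longer than max_hops and combine the rest with the context."""
    kept = [p for p in paths if hop_count(p) <= max_hops]
    relations = "\n".join(format_path(p) for p in kept)
    return (
        f"Context:\n{search_context}\n\n"
        f"Known relationships:\n{relations}\n\n"
        f"Question: {question}"
    )

# Hypothetical paths: the second has 3 hops and should be dropped.
paths = [
    ["aspirin", "TREATS", "headache"],
    ["aspirin", "TREATS", "headache", "SYMPTOM_OF", "migraine",
     "TREATED_BY", "sumatriptan"],
]
prompt = build_prompt("What does aspirin treat?", "(search results here)", paths)
```

Rendering each path as a single line of text keeps the relationship context compact next to the search results.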

We evaluated this implementation against the baseline RAG pipeline (our pipeline minus the relation paths) using the RAGAS metrics Answer Correctness and Answer Similarity. Answer Correctness measures the factual similarity between the ground truth answer and the generated answer, and Answer Similarity measures the semantic similarity between these two elements. Our evaluation set was a set of 50 queries where the ground truth was assigned by human domain experts. The LLM used to generate the answer was Claude-v2 from Anthropic while the one used for evaluation was Claude-v3 (Sonnet). The table below shows the averaged Answer Correctness and Similarity over all 50 queries, for the Baseline and my GraphRAG pipeline respectively.

| Pipeline | Answer Correctness | Answer Similarity |
|---|---|---|
| Baseline | 0.417 | 0.403 |
| GraphRAG (inference) | 0.737 | 0.758 |

As you can see, the performance gain from using the KG to augment the query for RAG appears to be quite impressive. Since we already have the KG and the NER available from previous projects, it is a very low effort addition to make to our pipeline. Of course, we would need to verify these results using further human evaluations.
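To put a number on the gain, the short sketch below computes the relative improvement from the averaged scores in the table, which works out to roughly 77% for Answer Correctness and 88% for Answer Similarity. The helper names are made up, and the per-query scores in the last line are hypothetical values included only to show the averaging step.

```python
from statistics import mean

def average_score(per_query_scores):
    """Average a metric over all evaluation queries."""
    return mean(per_query_scores)

def relative_gain(baseline, candidate):
    """Fractional improvement of candidate over baseline."""
    return (candidate - baseline) / baseline

# Averaged scores reported in the table above.
reported = {
    "answer_correctness": {"baseline": 0.417, "graphrag": 0.737},
    "answer_similarity": {"baseline": 0.403, "graphrag": 0.758},
}
gains = {
    metric: relative_gain(scores["baseline"], scores["graphrag"])
    for metric, scores in reported.items()
}

# Hypothetical per-query scores, only to show the averaging step.
example_avg = average_score([0.5, 0.75, 1.0])
```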

I recently came across the paper Knowledge Graph based Thought: A Knowledge Graph enhanced LLM Framework for pan-cancer Question Answering (Feng et al, 2024). In it, the authors identify four broad classes of triplet patterns that their questions (i.e., in their domain) can be decomposed into and addressed using reasoning approaches backed by Knowledge Graphs: One hop, Multi-hop, Intersection and Attribute problems. The idea is to use an LLM prompt to identify the entities and relationships in the question, then use an LLM to determine which of these templates should be used to address the question and produce an answer. Depending on the path chosen, an LLM is used to generate a Cypher query (an industry standard query language for graph databases originally introduced by Neo4j) to extract the missing entities and relationships in the template and answer the question. An interesting future direction for my GraphRAG implementation would be to incorporate some of the ideas from this paper.