Agentic AI systems act as autonomous digital workers that perform complex tasks with minimal supervision. They are gaining traction quickly: one estimate suggests that by 2025, 35% of companies will have implemented AI agents. However, autonomy raises concerns in high-stakes fields, where even subtle errors can have serious consequences. This leads many to believe that human feedback in agentic AI ensures safety, accountability, and trust.

Human-in-the-loop (HITL) validation is a collaborative design in which humans validate or influence an AI's outputs. Human checkpoints catch errors earlier and keep the system oriented toward human values, which in turn supports better compliance and greater trust in the agentic AI. It acts as a safety net for complex tasks. In this article, we'll compare workflows with and without HITL to illustrate these trade-offs.

Human-in-the-Loop: Concept and Benefits

Human-in-the-Loop (HITL) is a design pattern in which an AI workflow explicitly includes human judgment at key points. The AI may generate a provisional output and pause so that a human can review, approve, or edit it. In such a workflow, the human review step sits between the AI component and the final output.
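The pattern can be sketched in plain Python, independent of any agent framework. The names below (`ReviewDecision`, `review_checkpoint`, and the `reviewer` callable) are hypothetical and exist only for this illustration; a real system would replace the scripted reviewers with a console prompt or a review UI.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ReviewDecision:
    """Outcome of a human review (hypothetical structure for this sketch)."""
    action: str                        # "approve", "edit", or "reject"
    edited_text: Optional[str] = None  # only used when action == "edit"


def review_checkpoint(provisional: str,
                      reviewer: Callable[[str], ReviewDecision]) -> Optional[str]:
    """Pause on a provisional AI output and let a human decide what happens next.

    Returns the final text, or None if the reviewer rejects the output.
    """
    decision = reviewer(provisional)
    if decision.action == "approve":
        return provisional
    if decision.action == "edit":
        if decision.edited_text is None:
            raise ValueError("an 'edit' decision must include edited_text")
        return decision.edited_text
    if decision.action == "reject":
        return None
    raise ValueError(f"unknown review action: {decision.action!r}")


# Scripted reviewers stand in for a real person here.
def approve_all(text: str) -> ReviewDecision:
    return ReviewDecision("approve")


def fix_typo(text: str) -> ReviewDecision:
    return ReviewDecision("edit", edited_text=text.replace("teh", "the"))


final = review_checkpoint("Summarise teh report.", fix_typo)
print(final)
```

The checkpoint sits between the AI component and the final output: nothing leaves the function without passing through the reviewer's decision.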

Benefits of Human Validation

- Error reduction and accuracy: A human in the loop reviews the AI's outputs for potential errors and refines them.

- Trust and accountability: Human validation makes a system's decisions understandable and accountable.

- Compliance and safety: Human interpretation of laws and ethics helps ensure that AI actions conform to regulations and safety requirements.

When NOT to Use Human-in-the-Loop

- Routine or high-volume tasks: Humans become a bottleneck when speed matters. In such scenarios, full automation may be more effective.

- Time-critical systems: Real-time responses can't wait for human input. For instance, in rapid content filtering or live alerts, HITL might hold the system back.
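These criteria can be turned into a simple routing policy. The sketch below is one possible reading of the two bullets above, not a rule from any library: the `Task` fields and the 60-second threshold are assumptions made for illustration and should be tuned for a real system.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task description used only for this sketch."""
    name: str
    high_stakes: bool        # would an error have serious consequences?
    latency_budget_s: float  # how long the caller can wait for a result


# Assumed threshold: a human rarely responds faster than this.
MIN_REVIEW_SECONDS = 60.0


def needs_human_review(task: Task) -> bool:
    """Return True when a human checkpoint is both valuable and feasible."""
    if task.latency_budget_s < MIN_REVIEW_SECONDS:
        return False  # time-critical: the system cannot wait for human input
    # Routine work stays automated; high-stakes work gets a checkpoint.
    return task.high_stakes


live_alert = Task("live alert", high_stakes=True, latency_budget_s=0.5)
contract = Task("publish contract summary", high_stakes=True, latency_budget_s=86_400)
tagging = Task("tag support tickets", high_stakes=False, latency_budget_s=3_600)

for task in (live_alert, contract, tagging):
    mode = "human review" if needs_human_review(task) else "fully automated"
    print(f"{task.name} -> {mode}")
```

Checking the latency budget first reflects the point that a time-critical system cannot pause for a person, however high the stakes.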

What Makes the Difference: Comparing Two Scenarios

Without Human-in-the-Loop

In the fully automated scenario, the agentic workflow proceeds autonomously. As soon as input is provided, the agent generates content and takes the action. For example, an AI assistant might, in some cases, submit a user's time-off request without confirming. This gives the greatest speed and potential scalability. The downside, of course, is that nothing is checked by a human. There is also a conceptual difference between an error made by a human and an error made by an AI agent: an agent might misinterpret instructions or perform an undesired action that could lead to harmful outcomes.

With Human-in-the-Loop

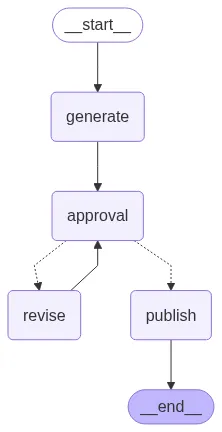

In the HITL (human-in-the-loop) scenario, we insert a human step. After producing a rough draft, the agent stops and asks a person to approve or change it. If the draft is approved, the agent publishes the content. If not, the agent revises the draft based on the feedback and circles back for another review. This scenario offers a higher degree of accuracy and trust, since humans can catch errors before anything is finalized. For example, adding a confirmation step reduces "accidental" actions and confirms that the agent did not misunderstand the input. The downside, of course, is that it requires more time and human effort.
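The draft, review, revise cycle described here can be sketched as a small loop. Everything in this block is illustrative: `draft_review_loop`, the stub functions, and the `max_rounds` cap are assumptions of the sketch (the cap in particular is a design choice that the workflow described above does not mention).

```python
from typing import Callable, Optional, Tuple

# A reviewer returns (approved, feedback). Feedback is ignored when approved.
Reviewer = Callable[[str], Tuple[bool, str]]


def draft_review_loop(generate: Callable[[], str],
                      revise: Callable[[str, str], str],
                      reviewer: Reviewer,
                      max_rounds: int = 3) -> Optional[str]:
    """Generate a draft, then alternate human review and revision.

    Returns the approved draft, or None if no draft is approved
    within max_rounds reviews.
    """
    draft = generate()
    for _ in range(max_rounds):
        approved, feedback = reviewer(draft)
        if approved:
            return draft  # only an approved draft is published
        draft = revise(draft, feedback)
    return None  # nothing was approved, so nothing is published


def stub_generate() -> str:
    return "Agentic AI is autonomus."


def stub_revise(draft: str, feedback: str) -> str:
    # A real reviser would call a model with the feedback; this one fixes one typo.
    return draft.replace("autonomus", "autonomous")


# The scripted reviewer rejects the first draft and approves the second.
scripted = iter([(False, "Fix the spelling."), (True, "")])
published = draft_review_loop(stub_generate, stub_revise, lambda d: next(scripted))
print(published)
```

Capping the number of rounds keeps a persistent disagreement from looping forever; returning None rather than the last draft ensures that unapproved content is never published.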

Example Implementation in LangGraph

Below is an example using LangGraph and GPT-4o-mini. We define two workflows: one fully automated and one with a human approval step.

Scenario 1: Without Human-in-the-Loop

In the first scenario, we'll create an agent with a simple workflow. It takes a topic to write an article about (in this demo the topic is hard-coded in the prompt) and uses GPT-4o-mini to generate the response.

from langgraph.graph import StateGraph, END
from typing import TypedDict
from openai import OpenAI
from dotenv import load_dotenv
import os

load_dotenv()
OPENAI_API_KEY = os.getenv("OPENAI_API_KEY")

# --- OpenAI client ---
client = OpenAI(api_key=OPENAI_API_KEY)  # Replace with your key

# --- State Definition ---
class ArticleState(TypedDict):
    draft: str

# --- Nodes ---
def generate_article(state: ArticleState):
    # Ask the model for a draft and store it in the shared state
    prompt = "Write a professional yet engaging 150-word article about Agentic AI."
    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": prompt}]
    )
    state["draft"] = response.choices[0].message.content
    print(f"\n[Agent] Generated Article:\n{state['draft']}\n")
    return state

def publish_article(state: ArticleState):
    # No review step: whatever was generated is published as-is
    print(f"[System] Publishing Article:\n{state['draft']}\n")
    return state

# --- Autonomous Workflow ---
def autonomous_workflow():
    print("\n=== Autonomous Publishing ===")
    builder = StateGraph(ArticleState)
    builder.add_node("generate", generate_article)
    builder.add_node("publish", publish_article)
    builder.set_entry_point("generate")
    builder.add_edge("generate", "publish")
    builder.add_edge("publish", END)
    graph = builder.compile()

    # Save diagram
    with open("autonomous_workflow.png", "wb") as f:
        f.write(graph.get_graph().draw_mermaid_png())

    graph.invoke({"draft": ""})

if __name__ == "__main__":
    autonomous_workflow()

Code Implementation: This code sets up a workflow with two nodes, generate_article and publish_article, linked sequentially. When run, the agent prints its draft and then publishes it immediately.
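To see what "linked sequentially" means without an API key, the same two-step flow can be imitated in plain Python. This is only a conceptual sketch and does not reflect how LangGraph is implemented internally; `run_sequential`, `fake_generate`, and `fake_publish` are hypothetical names, and the model call is replaced by a stub.

```python
from typing import Callable, Dict, List

State = Dict[str, str]
Node = Callable[[State], State]


def run_sequential(nodes: List[Node], state: State) -> State:
    """Call each node in order, feeding each one the state returned by the previous."""
    for node in nodes:
        state = node(state)
    return state


def fake_generate(state: State) -> State:
    # Stand-in for the model call, so the sketch runs offline.
    return {**state, "draft": "A short placeholder article."}


def fake_publish(state: State) -> State:
    print(f"[System] Publishing Article:\n{state['draft']}")
    return {**state, "status": "published"}


result = run_sequential([fake_generate, fake_publish], {"draft": ""})
```

Because no node ever waits for a person, whatever `fake_generate` returns is published unchanged, which is exactly the risk of the fully automated scenario.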

Agent Workflow Diagram

Agent Response

"""Agentic AI refers to advanced artificial intelligence systems that possess the ability to make autonomous decisions based on their environment and objectives. Unlike traditional AI, which relies heavily on predefined algorithms and human input, agentic AI can analyze complex data, learn from experiences, and adapt its behavior accordingly. This technology harnesses machine learning, natural language processing, and cognitive computing to perform tasks ranging from managing supply chains to personalizing user experiences.

The potential applications of agentic AI are vast, transforming industries such as healthcare, finance, and customer service. For instance, in healthcare, agentic AI can analyze patient data to provide tailored treatment recommendations, leading to improved outcomes. As businesses increasingly adopt these autonomous systems, ethical considerations surrounding transparency, accountability, and job displacement become paramount. Embracing agentic AI offers opportunities to enhance efficiency and innovation, but it also requires careful consideration of its societal impact. The future of AI is not just about automation; it's about intelligent collaboration.

"""

Scenario 2: With Human-in-the-Loop

In this scenario, we first create two tools, revise_article_tool and publish_article_tool. revise_article_tool revises the article's content according to the user's feedback. Once the user is done giving feedback and is satisfied with the agent's response, typing publish runs the second tool, publish_article_tool, which outputs the final article content.

from langgraph.graph import StateGraph, END
from typing import TypedDict, Literal
from openai import OpenAI
from dotenv import load_dotenv
import os

load_dotenv()

# --- OpenAI client ---
OPENAI_API_KEY = os.getenv("OPENAI_API_KEY")
client = OpenAI(api_key=OPENAI_API_KEY)

# --- State Definition ---
class ArticleState(TypedDict):
    draft: str
    approved: bool
    feedback: str
    selected_tool: str

# --- Tools ---
def revise_article_tool(state: ArticleState):
    """Tool to revise article based on feedback"""
    prompt = f"Revise the following article based on this feedback: '{state['feedback']}'\n\nArticle:\n{state['draft']}"
    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": prompt}]
    )
    revised_content = response.choices[0].message.content
    print(f"\n[Tool: Revise] Revised Article:\n{revised_content}\n")
    return revised_content

def publish_article_tool(state: ArticleState):
    """Tool to publish the article"""
    print(f"[Tool: Publish] Publishing Article:\n{state['draft']}\n")
    print("Article successfully published!")
    return state['draft']

# --- Available Tools Registry ---
AVAILABLE_TOOLS = {
    "revise": revise_article_tool,
    "publish": publish_article_tool
}

# --- Nodes ---
def generate_article(state: ArticleState):
    prompt = "Write a professional yet engaging 150-word article about Agentic AI."
    response = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": prompt}]
    )
    state["draft"] = response.choices[0].message.content
    print(f"\n[Agent] Generated Article:\n{state['draft']}\n")
    return state

def human_approval_and_tool_selection(state: ArticleState):
    """Human validates and selects which tool to use"""
    print("Available actions:")
    print("1. Publish the article (type 'publish')")
    print("2. Revise the article (type 'revise')")
    print("3. Reject and provide feedback (type 'feedback')")

    decision = input("\nWhat would you like to do? ").strip().lower()

    if decision == "publish":
        state["approved"] = True
        state["selected_tool"] = "publish"
        print("Human validated: PUBLISH tool selected")
    elif decision in ("revise", "feedback"):
        # Both options collect feedback and route to the revise tool
        state["approved"] = False
        state["selected_tool"] = "revise"
        state["feedback"] = input("Please provide feedback for revision: ").strip()
        print("Human validated: REVISE tool selected with feedback")
    else:
        print("Invalid input. Defaulting to revision...")
        state["approved"] = False
        state["selected_tool"] = "revise"
        state["feedback"] = input("Please provide feedback for revision: ").strip()
    return state

def execute_validated_tool(state: ArticleState):
    """Execute the human-validated tool"""
    tool_name = state["selected_tool"]
    if tool_name in AVAILABLE_TOOLS:
        print(f"\n Executing validated tool: {tool_name.upper()}")
        tool_function = AVAILABLE_TOOLS[tool_name]
        if tool_name == "revise":
            # Update the draft with revised content
            state["draft"] = tool_function(state)
            # Reset approval status for next iteration
            state["approved"] = False
            state["selected_tool"] = ""
        elif tool_name == "publish":
            # Execute publish tool
            tool_function(state)
            state["approved"] = True
    else:
        print(f"Error: Tool '{tool_name}' not found in available tools")
    return state

# --- Workflow Routing Logic ---
def route_after_tool_execution(state: ArticleState) -> Literal["approval", "end"]:
    """Route based on whether the article was published or needs more approval"""
    if state["selected_tool"] == "publish":
        return "end"
    else:
        return "approval"

# --- HITL Workflow ---
def hitl_workflow():
    print("\n=== Human-in-the-Loop Publishing with Tool Validation ===")
    builder = StateGraph(ArticleState)

    # Add nodes
    builder.add_node("generate", generate_article)
    builder.add_node("approval", human_approval_and_tool_selection)
    builder.add_node("execute_tool", execute_validated_tool)

    # Set entry point
    builder.set_entry_point("generate")

    # Add edges
    builder.add_edge("generate", "approval")
    builder.add_edge("approval", "execute_tool")

    # Add conditional edges after tool execution
    builder.add_conditional_edges(
        "execute_tool",
        route_after_tool_execution,
        {"approval": "approval", "end": END}
    )

    # Compile graph
    graph = builder.compile()

    # Save diagram
    try:
        with open("hitl_workflow_with_tools.png", "wb") as f:
            f.write(graph.get_graph().draw_mermaid_png())
        print("Workflow diagram saved as 'hitl_workflow_with_tools.png'")
    except Exception as e:
        print(f"Could not save diagram: {e}")

    # Execute workflow
    initial_state = {
        "draft": "",
        "approved": False,
        "feedback": "",
        "selected_tool": ""
    }
    graph.invoke(initial_state)

if __name__ == "__main__":
    hitl_workflow()

"""Human Feedback:

Keep the discussion broad and simple so that both tech and non-tech people can understand

"""

Agent Workflow Diagram

Agent Response

"""Understanding Agentic AI: The Future of Intelligent Assistance

Agentic AI represents a groundbreaking advancement in the field of artificial intelligence, characterized by its ability to operate independently while exhibiting goal-directed behavior. Unlike traditional AI systems that require constant human intervention, Agentic AI can analyze data, make decisions, and execute tasks autonomously. This innovative technology has the potential to transform various sectors, including healthcare, finance, and customer service, by streamlining processes and enhancing efficiency. One of the most notable features of Agentic AI is its adaptability; it learns from interactions and outcomes, continuously improving its performance. As more businesses adopt this technology, the opportunities for personalized user experiences and advanced predictive analytics expand significantly. However, the rise of Agentic AI also raises important discussions about ethics, accountability, and security. Striking the right balance between leveraging its capabilities and ensuring responsible usage will be crucial as we navigate this new era of intelligent automation. Embracing Agentic AI could fundamentally change our interactions with technology, ultimately enriching our daily lives and reshaping industries.

Article successfully published!

"""
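The approval node in Scenario 2 reads from the console, which makes it awkward to test automatically. One option, sketched below, is to pass the prompt function in as a parameter so that a test can replay canned answers. The `ask` parameter and the `scripted` helper are hypothetical additions for this sketch and are not part of the code above; the node here also returns a new state dictionary instead of mutating the one it receives.

```python
from typing import Callable, Dict, Iterable

State = Dict[str, object]


def approval_node(state: State, ask: Callable[[str], str] = input) -> State:
    """Record the human decision in the state; any input other than 'publish' means revise."""
    decision = ask("What would you like to do? ").strip().lower()
    if decision == "publish":
        return {**state, "approved": True, "selected_tool": "publish"}
    feedback = ask("Please provide feedback for revision: ").strip()
    return {**state, "approved": False, "selected_tool": "revise", "feedback": feedback}


def scripted(answers: Iterable[str]) -> Callable[[str], str]:
    """Build a replacement for input() that replays canned answers in order."""
    it = iter(answers)
    return lambda _prompt: next(it)


start = {"draft": "First draft", "approved": False, "feedback": "", "selected_tool": ""}
revised = approval_node(start, scripted(["revise", "Keep it simple."]))
published = approval_node(start, scripted(["PUBLISH "]))
print(revised["selected_tool"], "|", published["selected_tool"])
```

In interactive use the default `ask=input` keeps the console behaviour, while tests can drive both the publish and the revise paths without a person at the keyboard.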

Observations

This demonstration mirrored common HITL outcomes. With human review, the final article was clearer and more accurate, consistent with findings that HITL improves AI output quality. Human feedback removed errors and refined phrasing, confirming these benefits. Meanwhile, each review cycle added latency and workload. The automated run finished nearly instantly, whereas the HITL workflow paused twice for feedback. In practice, this trade-off is expected: machines provide speed, but humans provide precision.
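To put numbers on this trade-off in your own runs, the review step can be wrapped with a timer. The sketch below assumes a reviewer that takes a draft and returns an (approved, feedback) pair; `timed_reviewer`, `slow_reviewer`, and the `stats` dictionary are hypothetical names, and the short sleep merely stands in for a person reading the draft.

```python
import time
from typing import Callable, Dict, Tuple

Reviewer = Callable[[str], Tuple[bool, str]]


def timed_reviewer(reviewer: Reviewer, stats: Dict[str, float]) -> Reviewer:
    """Wrap a reviewer so each call adds to a review count and a total wait time."""
    def wrapper(draft: str) -> Tuple[bool, str]:
        start = time.perf_counter()
        try:
            return reviewer(draft)
        finally:
            elapsed = time.perf_counter() - start
            stats["reviews"] = stats.get("reviews", 0) + 1
            stats["wait_seconds"] = stats.get("wait_seconds", 0.0) + elapsed
    return wrapper


def slow_reviewer(draft: str) -> Tuple[bool, str]:
    time.sleep(0.01)  # stands in for a person reading the draft
    return ("final" in draft, "Please finalise the wording.")


stats: Dict[str, float] = {}
review = timed_reviewer(slow_reviewer, stats)
review("first draft")
review("final draft")
print(f"reviews: {stats['reviews']}, total wait: {stats['wait_seconds']:.3f}s")
```

Logging the number of review cycles alongside the time spent waiting makes it easier to judge whether a checkpoint is worth its cost for a given workflow.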

Conclusion

In conclusion, human feedback can significantly improve agentic AI output. It acts as a safety net for errors and can keep outputs aligned with human intent. In this article, we showed that even a simple review step improved text reliability. The decision to use HITL should ultimately depend on context: use human review in critical cases and skip it in routine situations.

As the use of agentic AI increases, the question of when to rely on automated processes and when to add oversight of those processes becomes more important. Regulations and best practices increasingly require some level of human review in high-risk AI implementations. The overall idea is to use automation for its efficiency while still having humans take ownership of key decisions taken once a day. Flexible human checkpoints will help us use agentic AI safely and responsibly.


Frequently Asked Questions

A. HITL is a design in which humans validate AI outputs at key points. It ensures accuracy, safety, and alignment with human values by adding review steps before final actions.

A. HITL is unsuitable for routine, high-volume, or time-critical tasks where human intervention slows performance, such as live alerts or real-time content filtering.

A. Human feedback reduces errors, ensures compliance with laws and ethics, and builds trust and accountability in AI decision-making.

A. Without HITL, AI acts autonomously with speed but risks unchecked errors. With HITL, humans review drafts, improving reliability but adding time and effort.

A. Oversight ensures that AI actions remain safe, ethical, and aligned with human intent, especially in high-stakes applications where errors have serious consequences.
